It's time for an embedded systems design verification revolution

May 01, 2017


There have been very few successful revolutions in the electronic design automation (EDA) market segment. The last was probably the migration from gate-level schematic design to language-based register transfer-level (RTL) design. This involved a new methodology and new languages, both of which have evolved over time. There have been several attempts to create other revolutions, such as the migration to the electronic system level (ESL), but these have fizzled in general because evolutionary approaches present a decent and less risky alternative.

A revolution is building right now in the verification space. Remember when everyone graduated from college and wanted to be a design engineer? Those who were not good enough got moved onto verification. Those days are over, and some suggest that being a verification engineer today carries as much prestige, and in some cases more. Verification tools are now about to take a page out of the design flow playbook. Who knows where this will lead the industry?

Design always relies on abstraction. Functional design ignores many details about how things happen, instead relying on libraries and synthesis to go from abstract notions to an implementation. Yes, a lot of other stuff happens along the way, but most of it is kept from the designer. In verification, the team’s primary focus is on stimulus. Sure, the languages and methodologies have evolved to make stimulus creation more productive. Constrained random test pattern generation was a large transformation: instead of writing directed tests, verification teams invested time creating a model of the stimulus rather than creating the stimulus directly. This transformation has allowed us to stay with the same verification methodology for over 20 years, but it is reaching the tail end of its lifecycle.

Design today relies on reuse and packaged intellectual property (IP), but verification has not benefited from this trend to the same degree. Most chips are at least 90 percent reuse and utilize tens or hundreds of IP blocks. While some blocks of verification IP exist, they have not delivered a comparable gain because there is no assembly methodology for verification and not much in the way of abstraction.

All that will change with the development of the Accellera Portable Stimulus standard based on over a decade’s worth of market-driven experience from Breker Verification Systems and Mentor, a Siemens Business. Each company developed tools in this area and honed technologies during this period. Now it’s time for them to come together and merge this experience, which is indeed what’s happening.

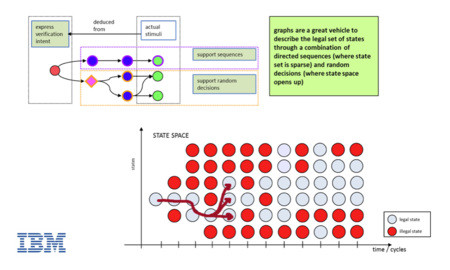

At the heart of this new standard are graph-based models. Graphs are something that everyone in both the hardware and software communities understands. This is not the revolution. The revolution is that the graph is a model of verification intent. It does not define stimulus; it is a model of what should be verified (Figure 1). A synthesis tool, much like the one used for hardware design, reads that model and synthesizes a test case that targets some objective.

[Figure 1 | Portable stimulus enables a graph-based verification approach where users are able to generate stimuli from a graph. Source: IBM, from DVCon India 2015 User Track Presentation.]

Instead of randomly wiggling inputs in the blind hope that they do something useful, the user can define the user-level goal and have the tool generate a test that targets that specific goal. The model still contains multiple ways in which that goal can be accomplished, and the tool continues to leverage the randomization approaches that have been both popular and successful. It also continues to leverage the existing verification infrastructure of bus functional models to interface with the design. What it adds is an efficient method of generating system-level tests beyond the scope of existing verification methodologies.
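To make the idea concrete, here is a minimal Python sketch of a graph of verification intent and a toy "synthesis" step that picks one legal route to a stated goal. It is an illustration only and is not written in the Portable Stimulus language: the action names, `INTENT_GRAPH`, `paths_to_goal`, and `synthesize_test` are all hypothetical.

```python
import random

# Hypothetical intent graph: each key is an action, each value lists the
# actions that may legally follow it. The action names are invented.
INTENT_GRAPH = {
    "start": ["dma_copy", "cpu_write"],
    "dma_copy": ["check_memory"],
    "cpu_write": ["cache_flush", "check_memory"],
    "cache_flush": ["check_memory"],
    "check_memory": [],
}


def paths_to_goal(graph, node, goal, prefix=()):
    """Yield every acyclic path from node to goal as a list of actions."""
    path = prefix + (node,)
    if node == goal:
        yield list(path)
        return
    for nxt in graph[node]:
        if nxt not in path:  # never revisit an action on the same path
            yield from paths_to_goal(graph, nxt, goal, path)


def synthesize_test(graph, start, goal, rng):
    """Choose one legal path to the goal at random; the path is the test."""
    candidates = list(paths_to_goal(graph, start, goal))
    if not candidates:
        raise ValueError(f"goal {goal!r} is unreachable from {start!r}")
    return rng.choice(candidates)


rng = random.Random(2017)  # fixed seed so a failing test can be replayed
test = synthesize_test(INTENT_GRAPH, "start", "check_memory", rng)
print(" -> ".join(test))
```

The goal (`check_memory`) is fixed by the user, while the route to it is still randomized, which mirrors the split between intent and stimulus described above.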

Another benefit of this approach is that, because a synthesis tool is involved, it knows what needs to be different between a test run on a simulator, the same test run on an emulator, and the same test run on real silicon. This will unify verification across the entire design flow, from IP verification and system verification to silicon bring-up. In addition, the necessary layers are being inserted that allow software verification to be included as well. When software drivers are ready, they can easily be inserted in place of the more general information contained in the model. The same goes for protocol stacks or other software layers.
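The retargeting idea can be sketched in a few lines of Python: one abstract test, two renderers. Everything here is assumed for illustration, including the `bfm.write` and `bfm.read_expect` calls, the `fail()` hook, and the addresses; a real tool would emit whatever the target testbench or firmware environment expects.

```python
# One abstract test, expressed as (operation, address, data) steps.
ABSTRACT_TEST = [
    ("write", 0x1000, 0xCAFE),
    ("read_check", 0x1000, 0xCAFE),
]


def render_for_simulator(test):
    """Emit calls on a hypothetical bus functional model object named bfm."""
    lines = []
    for op, addr, data in test:
        if op == "write":
            lines.append(f"bfm.write(0x{addr:08X}, 0x{data:08X});")
        elif op == "read_check":
            lines.append(f"bfm.read_expect(0x{addr:08X}, 0x{data:08X});")
        else:
            raise ValueError(f"unknown operation: {op}")
    return lines


def render_for_silicon(test):
    """Emit bare-metal C statements; fail() is a hypothetical error hook."""
    lines = []
    for op, addr, data in test:
        if op == "write":
            lines.append(f"*(volatile uint32_t *)0x{addr:08X}u = 0x{data:08X}u;")
        elif op == "read_check":
            lines.append(
                f"if (*(volatile uint32_t *)0x{addr:08X}u != 0x{data:08X}u) fail();"
            )
        else:
            raise ValueError(f"unknown operation: {op}")
    return lines


RENDERERS = {"simulator": render_for_simulator, "silicon": render_for_silicon}

for target, render in RENDERERS.items():
    print(f"[{target}]")
    print("\n".join(render(ABSTRACT_TEST)))
```

Keeping the scenario separate from its rendering is what lets the same intent follow the design from simulation to bring-up; an emulator target would simply be a third entry in `RENDERERS`.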

The standard includes notions of assembly and reuse. When a piece of design IP is purchased, the user should expect to get the verification model for that component as well, which will be placed directly into the higher-level verification model. Constraints can be placed on the graph to prohibit certain functionalities from being targeted, and coverage information that results from running the generated tests can be annotated onto the graph to provide a direct measure of progress.
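A toy Python sketch of these three ideas (assembly, constraints, and coverage annotation) follows. It is not the standard's syntax: the `compose` and `legal_paths` helpers, the UART sub-graph, and the node names are hypothetical and chosen only to keep the example small.

```python
from collections import Counter


def compose(system_graph, ip_name, ip_graph, entry, attach_at):
    """Return a new graph with the IP's graph namespaced and attached."""
    merged = {node: list(succ) for node, succ in system_graph.items()}
    for node, succ in ip_graph.items():
        merged[f"{ip_name}.{node}"] = [f"{ip_name}.{s}" for s in succ]
    merged[attach_at].append(f"{ip_name}.{entry}")
    return merged


def legal_paths(graph, node, forbidden, prefix=()):
    """Yield root-to-leaf paths that never touch a forbidden action."""
    if node in forbidden:
        return
    path = prefix + (node,)
    if not graph[node]:  # leaf: the scenario is complete
        yield path
        return
    for nxt in graph[node]:
        if nxt not in path:
            yield from legal_paths(graph, nxt, forbidden, path)


# System-level model plus a model assumed to ship with a purchased UART IP.
system = {"boot": ["idle"], "idle": []}
uart = {"init": ["tx", "rx"], "tx": ["loopback"], "rx": [], "loopback": []}
soc = compose(system, "uart", uart, entry="init", attach_at="boot")

# Constraint: loopback is not available on this chip, so never target it.
tests = list(legal_paths(soc, "boot", forbidden={"uart.loopback"}))

# Annotate every node of the graph with how often the tests exercised it.
hits = Counter(node for path in tests for node in path)
annotated = {node: hits[node] for node in soc}
uncovered = sorted(node for node, count in annotated.items() if count == 0)
print(annotated)
print("uncovered:", uncovered)
```

In this sketch the constraint on `uart.loopback` also leaves `uart.tx` unreached, because its only continuation is forbidden. The annotated graph makes that gap visible, which is the kind of direct progress measure the paragraph above describes.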

We are looking at a paradigm shift in verification methodology that progresses with the design throughout a flow. It brings the software team into the picture, incorporating notions of integration and reuse that make all aspects of the verification flow look a lot more like design. This will result in totally new verification methodologies being developed and rolled out over the next two decades, and make the process of silicon realization more efficient and effective. We may also see more people want to become verification engineers when they graduate. That is a revolution that everyone can be happy about.

Breker Verification Systems

www.brekersystems.com
