Open Standards for Accelerating Embedded Vision and Inferencing: An Industry Overview

January 23, 2020

Story

The ever-advancing field of machine learning has created new opportunities for deploying devices and applications that leverage neural network inferencing.

The ever-advancing field of machine learning has created new opportunities for deploying devices and applications that leverage neural network inferencing with never-before-seen levels of vision-based functionality and accuracy. But, the rapidly evolving field has given way to a confusing landscape of processors, accelerators, and libraries. This article treats open interoperability standards and their role in reducing costs and barriers to using inferencing and vision acceleration in real-world products.

Every industry needs open standards to reduce costs and time to market through increased interoperability between ecosystem elements. Open standards and proprietary technologies have complex and interdependent relationships. Proprietary APIs and interfaces are often the Darwinian testing ground and can remain dominant in the hands of a smart market leader and that is as it should be. Strong open standards result from a wider need for a proven technology in the industry and can provide healthy, motivating competition. In the long view, an open standard that is not controlled by, or dependent on, any single company can often be the thread of continuity for industry forward progress as technologies, platforms and market positions swirl and evolve.

The Khronos Group is a non-profit standards consortium that is open for any company to join with over 150 members. All standards organizations exist to provide a safe place for competitors to cooperate for the good of all. The Khronos Group’s area of expertise is creating open, royalty-free API standards that enable software applications libraries and engines harness the power of silicon acceleration for demanding use cases such as 3D graphics, parallel computation, vision processing and inferencing.

Creating Embedded Machine Learning Applications

Many interoperating pieces need to work together to train a neural network and deploy it successfully on an embedded, accelerated inferencing platform – as shown in figure 1. Effective neural network training typically takes large data sets, uses floating point precision and is run on powerful GPU-accelerated desktop machines or in the cloud. Once training is complete, the trained neural network is ingested into an inferencing run-time engine optimized for fast tensor operations, or a machine learning compiler that transforms the neural network description into executable code. Whether an engine or compiler is used, the final step is to accelerate the inferencing code on one of a diverse range of accelerator architectures ranging from GPUs through to dedicated tensor processors.

Fig 1. Steps in training neural networks and deploying them on accelerated inferencing platforms

So, how can industry open standards can help streamline this process? Figure 2. Illustrates Khronos standards that are being used in the field of vision and inferencing acceleration. In general, there is increasing interest in all these standards as processor frequency scaling gives way to parallel programming as the most effective way to deliver needed performance at acceptable levels of cost and power.

Fig 2. Khronos standards used in accelerating vision and inferencing applications and engines

Broadly, these standards can be divided into two groups: high-level and low-level. The high-level APIs focus on ease of programming with effective performance portability across multiple hardware architectures. In contrast, low-level APIs provide direct, explicit access to hardware resources for maximum flexibility and control. It is important that each project understand which level of API will best suit their development needs. Also, often the high-level APIs will use lower-level APIs in their implementation.

Let’s take a look at some of these Khronos standards in more detail.

SYCL - C++ Single-source Heterogeneous Programming

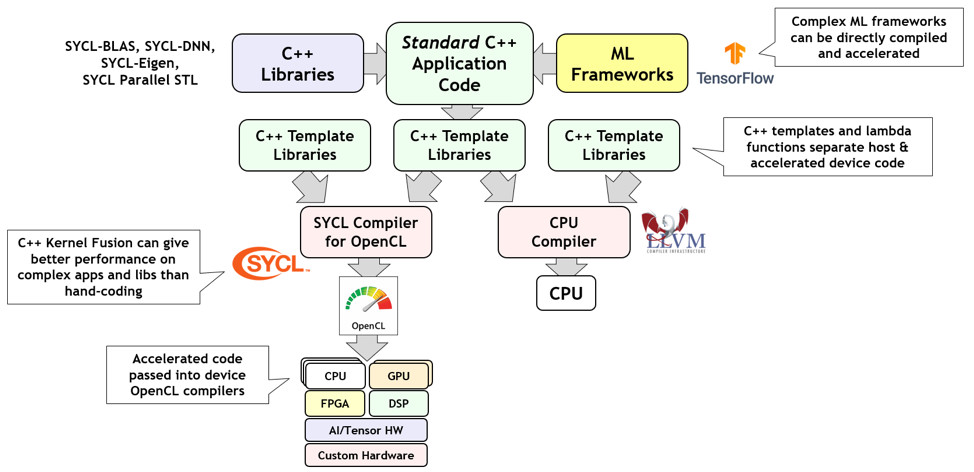

SYCL (pronounced ‘sickle’) uses C++ template libraries to dispatch selected parts of a standard ISO C++ application to offload processors. SYCL enables complex C++ machine learning frameworks and libraries to be straightforwardly compiled and accelerated to performance levels that in many cases outperform hand-tuned code. As shown in figure 3., by default, SYCL is implemented over the lower-level OpenCL standard API: feeding code for acceleration into OpenCL and the remaining host code through the system’s default CPU compiler.

Fig 3. SYCL splits a standard C++ application into CPU and OpenCL-accelerated code

There are an increasing number of SYCL implementations, some of which use proprietary back-ends, such as NVIDIA’s CUDA for accelerated code. Significantly, Intel’s new oneAPI Initiative contains a parallel C++ compiler called DPC++ that is a conformant SYCL implementation over OpenCL.

NNEF – Neural Network Exchange Format

There are dozens of neural network training frameworks in use today including Torch, Caffe, TensorFlow, Theano, Chainer, Caffe2, PyTorch, and MXNet and many more—and all use proprietary formats to describe their trained networks. There are also dozens, maybe even hundreds, of embedded inferencing processors hitting the market. Forcing that many hardware vendors to understand and import so many formats is a classic fragmentation problem that can be solved with an open standard as shown in figure 4.

Figure 4. The NNEF Neural Network Exchange Format enables streamline ingestion of trained networks by inferencing accelerators

The NNEF file format is targeted at providing an effective bridge between the worlds of network training and inferencing silicon – where Khronos’ proven multi-company governance model gives the hardware community a strong voice on how the format evolves in a way that meets the needs of companies developing processor toolchains and frameworks, often in safety critical markets.

NNEF is not the industry’s only neural network exchange format, ONNX is an open source project founded by Facebook and Microsoft and is a widely adopted format that is primarily focused on the interchange of networks between training frameworks. NNEF and ONNX are complementary as ONNX tracks rapid changes in training innovations and the machine learning research community, while NNEF is targeted at embedded inferencing hardware vendors that need a format with a more considered roadmap evolution. Khronos has initiated a growing open source tools ecosystem around NNEF, including importers and exporters from key frameworks and a model zoo to enable hardware developers to test their inferencing solutions.

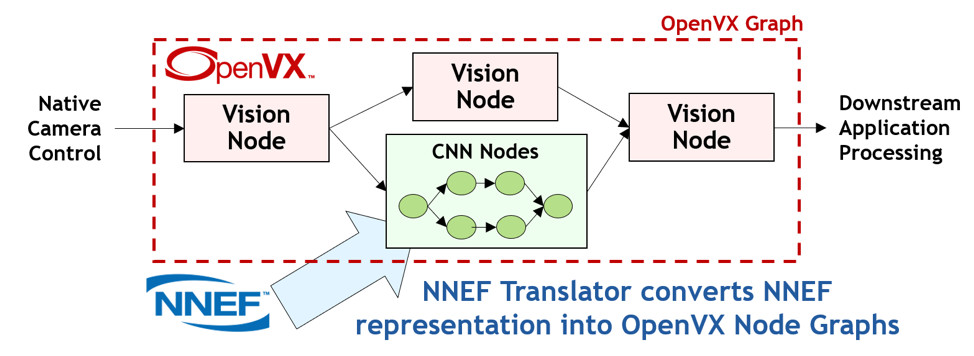

OpenVX – Portable Accelerated Vision Processing

OpenVX (VX stands for ‘vision acceleration’) streamlines the development of vision and inferencing software by providing a graph-level abstraction that enables a programmer to construct their required functionality through connecting a set of functions or ‘Nodes’. This high-level of abstraction enables silicon vendors to very effectively optimize their OpenVX drivers for efficient execution on almost any processor architecture. Over time, OpenVX has added inferencing functionality alongside the original vision Nodes - neural networks are just another graph after all! There is growing synergy between OpenVX and NNEF through the direct import of NNEF trained networks into OpenVX graphs as shown in figure 5.

Figure 5. An OpenVX graph can describe any combination of vision nodes and inferencing operations imported from an NNEF file

OpenVX 1.3 was released in October 2019 and enables carefully selected subsets of the specification that are targeted at vertical market segments, such as inferencing, to be implemented and tested as officially conformant. OpenVX also has a deep integration with OpenCL that enables a programmer to add their own custom accelerated Nodes for use within an OpenVX graph – providing a unique combination of easy programmability and customizability.

OpenCL – Heterogeneous Parallel Programming

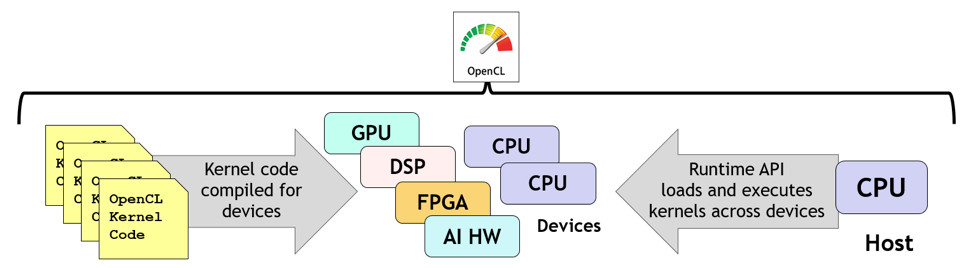

OpenCL is a low-level standard for cross-platform, parallel programming of diverse heterogeneous processors found in PC, servers, mobile devices and embedded devices. OpenCL provides C and C++-based languages for constructing kernel programs that can be compiled and executed in parallel across any processors in a system with an OpenCL compiler, giving explicit control over which kernels are executed on which processors to the programmer. The OpenCL run-time coordinates the discovery of accelerator devices, compiles kernels for selected devices, executes the kernels with sophisticated levels of synchronization and gathers the results as illustrated in figure 6.

Figure 6. OpenCL enables C or C++ kernel programs to be compiled and executed in parallel across any combination of heterogenous processors

OpenCL is used pervasively throughout the industry for providing the lowest ‘close-to-metal’ execution layer for compute, vision and machine learning libraries, engines and compilers.

OpenCL was originally designed for execution on high-end PC and supercomputer hardware, but in a similar evolution to OpenVX, processors needing OpenCL are getting smaller, with less precision, as they target edge vision and inferencing. The OpenCL working group is working to define functionality tailored to embedded processors and to enable vendors to ship selected functionality targeted at key power- and cost-sensitive uses cases with full conformance.

About the Author:

Neil Trevett is Vice President of Developer Ecosystems at NVIDIA where he helps enable applications to take advantage of advanced GPU and silicon acceleration. Neil is also the elected President of the Khronos Group, where he initiated the OpenGL ES standard used by billions worldwide every day, helped catalyze the WebGL and glTF projects to bring interactive 3D graphics to the Web, fostered the creation of the OpenVX standard for vision and inferencing acceleration, and chairs the OpenCL working group defining the open standard for heterogeneous parallel computation. Before NVIDIA Neil was at the forefront of the silicon revolution bringing interactive 3D to the PC, and he established the embedded graphics division of 3Dlabs to bring advanced visual processing to a wide range of non-PC platforms.