Reducing bugs in hardware design with EDA and formal verification technology

May 01, 2014

Complex SoCs are often behind schedule or require re-spins due to bugs not caught by verification. In order to meet hardware design challenges, and im...

Hardware design has never been easier. Long gone are the days when hardware designers were hand-designing Integrated Circuits (ICs) using transistors and logic gates.

Instead, most designs today start at the Electronic System Level (ESL) or Register Transfer Level (RTL) using high-level description languages such as C/C++, System C, System Verilog, Verilog, and VHDL. Designing in these languages, while embodying hardware design constructs and characteristics, is not that different from writing software code using C, C++, or any other languages.

The job of interpreting and mapping these high-level design descriptions expressing the functional behavior of the intended hardware rests upon the set of Electronic Design Automation (EDA) tools. It starts with high-level synthesis, synthesis, place and route, and all the backend processes that lead to actual silicon. EDA in the last few decades liberated hardware designers to think and design at a higher level than possible before. This sounds easy.

However, hardware design has never been more difficult. Moore's Law has pushed the size of IC design to more than 100 million gates and pushing toward 1 billion gates. The hardware design starts from a complex piece of software system before mapping to the actual physical form.

Hardware design challenges

Not only do hardware designers need to understand hardware design principles and requirements, they also need to be good software programmers who can write efficient and high-quality code. The challenge is also magnified by the fact that these complex “software” systems need to take into consideration physical requirements, such as area, performance and power consumption – something pure software systems may not be concerned with.

Ever-increasing design sizes coupled with the complex transformation processes following front-end hardware design create tremendous challenges for hardware designers.

The heart of all these challenges is ensuring the design meets the specification. After all, a piece of silicon is useless when there are bugs in it and it does not do what the user intended it to do in the system. A simple missed bug could make or break a company or a key project in a highly competitive market. While writing hardware design code in high-level languages is a complex and time-consuming task, the time spent in verification consumes more than half of the project cycle. The general rule of thumb holds that a good designer can write about 5,000 lines of original RTL code a month.

Verification challenges

While designing is getting harder, verification is getting worse.

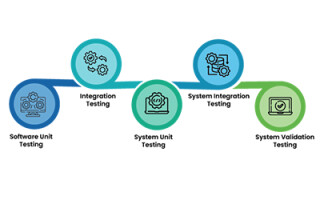

The typical verification process starts with designers doing some basic verification on their blocks before tossing them across the wall to the verification team for subsystem and chip-level verification. Simulation is the default verification tool whereby users provide input stimulus to the Design-Under-Test (DUT) and observe behaviors at the output from the simulator to see if they match intended design behaviors. Formerly, when the design was fairly small – about 10 years is considered old in this industry – simulation worked relatively well to catch most design bugs.

Debugging was not too difficult either because designs were small. However, the scale tipped at System-on-Chip (SoC) designs with multicore processors, large on-chip memory and interfaces to many peripherals. Today, 56 percent of designs contain two or more embedded processors[1].

Verifying such complex SoCs is difficult as manifested by the fact that only 30 percent of design projects achieve first silicon success. For the remaining 70 percent, logic/functional bugs are major contributors to chip flaws that cause re-spins[1]. It is not surprising that two-thirds of all projects are behind schedule as project teams try to find the last bug.

Improving verification methods

Verification needs improvement to meet hardware design challenges, improve quality and efficiency. Many techniques and methodologies have been proposed over the years, including constrained random simulation, code and functional coverage, and assertion-based verification. Big-box emulators come to the rescue so that more cycles can be churned within the tight project schedule. These have helped and are necessary, but there is an easier solution.

Looking at the time spent in verification, 36 percent is in debugging, 23 percent in creating and running tests, 22 percent in testbench development, and 16 percent in test planning[1]. Debugging time is the longest, which suggests that there are plenty of bugs in the design and points to a deficiency in the verification approach.

Most simulation is done at the subsystem and chip levels. Debugging at this level is hard because of the size of the DUT and the long simulation sequences, which could require a thousand or a million cycles to trace through to find the bug. It is not uncommon to hear that a particular bug took weeks if not months to debug. No company can afford wasting time like that.

The solution is simple – the block-level design quality must be improved to avoid finding bugs later in the project cycle. Simple bugs that could be found at the block level can turn into nasty bugs that take a long time to find at the subsystem or chip level. For block-level verification, nothing beats formal verification technology in finding corner case bugs and improving design quality in the most efficient manner.

Formal verification technology

Formal verification technology offers an advantage that's not found in simulation. It is exhaustive because it covers the entire input and state spaces automatically by virtue of its algorithm so designers and/or verification engineers don't have to spend time coming up with or missing design scenarios to verify.

When a bug is found, the tool will automatically generate a violation trace with the shortest path from reset to show where the bug is. Because of these characteristics, using formal technology for block-level verification offers improved design quality. No bug will be left behind and verification efficiency is improved because debugging will be easier and takes less time. With higher quality design blocks, the verification tasks at the subsystem and chip level will be reduced.

While formal technology is powerful, it has not been widely adopted for a couple of reasons:

· Not all blocks are good candidates for formal verification

· Conducting exhaustive search automatically is easier said than done. As a result, the tool often comes back with inconclusive results, leaving users unsure of what to do

Despite these challenges, certain forms of formal usages have been slowly adopted by the industry over the last decade. They include:

· Automatic formal to check for simple RTL properties to ensure there is no dead code, state machine deadlock or pragma violations

· Formal applications (apps) that target specific domains for verification, such as connectivity checks, clock domain crossing verification, or low-power verification

· Assertion-based verification where assertions written are used in simulation and in formal to find design bugs

Another use is formal verification sign-off, called End-to-End formal (Figure 1). In this use model, formal verification is not limited to verifying local or interface assertions, but rather checkers cover complete functionality of the block, from the input end to the output end. As such, formal verification is accountable for finding all the bugs in the design, and no simulation is needed for the block when formal is applied in such manner.

Finding all the bugs is the Holy Grail of any verification task. To achieve this seemingly impossible goal with End-to-End formal requires careful formal test planning, in depth knowledge of the design, thoughtful execution and creative minds to overcome formal complexity challenges. The solution is not just an EDA tool written as a piece of software, but a methodology that embodies the advances of formal technology written as software and the knowledge and experiences of the verification team.

The formal sign-off methodology incorporates four components (Figure 2):

1. End-to-End checkers that cover the entire functional space of the design

2. Constraints that dictate the environment the in which block operates

3. The complexity and, most importantly, techniques to overcome complexity, such as Abstraction Models and Bounded Proofs

4. Coverage to measure the quality of work

With a systematic approach to applying formal verification for sign-off, block-level design quality is improved and verification efficiency is increased. At the sub-system and chip level, energy and effort can be spent chasing bugs that lie in the interaction between blocks, not within blocks where the bulk of design functionality resides.

References

[1] 2012 Wilson Research Survey Results

Oski Technology