Testing is the only way to assure that code is correct

March 13, 2017

As systems in industrial, automotive, medical, and energy markets that involve human life and limb are connected to the IoT, the stakes get higher and the pressure for safety and reliability increases. While hardware can be physically isolated and protected, once a system is connected to the Internet, it becomes exposed through its software, which forms the “soft underbelly” of the IoT. And if these systems aren’t secure, they can’t be considered reliable or safe. That means the battle for safe and secure devices takes place on the field of software.

Producing safe and secure code has a number of dimensions. On one level, code that’s functionally correct (it does what it’s supposed to do) can still contain openings that a hacker can take advantage of. On another level, the code must be functionally safe in that it follows rules to prevent injury or damage, and it must be functionally secure in that it contains mechanisms such as encryption that prevent unauthorized access.

We’re making significant progress along these lines with coding standards such as MISRA and CERT C for correct coding practices, and with industry standards such as ISO 26262 for automotive and IEC 62304 for medical. Following guidelines such as these is one thing; verifying that the code actually obeys all of their detailed rules is another, and that can only be done by thorough analysis and testing.

A comprehensive set of analysis and testing tools is essential to such verification, and the better integrated it is with the other software tools and the more closely it fits the industry segment being targeted, the better. Safety and security must begin at the ground level, starting with the RTOS and drivers and continuing up to the final application. Requirements-based testing and verification must be done at the system level, while robustness testing and more focused analysis must be performed at the unit level.

Lifecycle traceability tools provide the ability to trace from high-level requirements to source code and back. This traceability provides both impact analysis capability and transparency and visibility into the software development lifecycle. Static analysis tools, used during the coding phase, can analyze software for quality and catch code vulnerabilities prior to compilation. This saves time and money by keeping code-level quality issues from propagating into the executables and the integrated units.
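To make the idea of bidirectional traceability concrete, here is a minimal Python sketch. It is not how any particular traceability tool works; the `Requirement` record, the requirement IDs and texts, and the function and test names are all hypothetical, chosen only to echo a lighting-control project. It shows the two queries the paragraph above describes: finding requirements with no linked test, and finding the requirements affected when a source unit changes.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One requirement plus its links to code and tests (hypothetical schema)."""
    req_id: str
    text: str
    source_units: list[str] = field(default_factory=list)  # functions that implement it
    tests: list[str] = field(default_factory=list)          # tests that verify it


def untested(requirements):
    """Forward trace: requirements that no test is linked to."""
    return [r.req_id for r in requirements if not r.tests]


def impacted_by(requirements, changed_unit):
    """Backward trace (impact analysis): requirements linked to a changed unit."""
    return [r.req_id for r in requirements if changed_unit in r.source_units]


# Hypothetical project data, for illustration only.
REQS = [
    Requirement("REQ-001", "Lamp dims when ambient light exceeds a threshold",
                ["dim_lamp", "read_sensor"], ["test_dim_lamp"]),
    Requirement("REQ-002", "A fault is reported when a sensor read fails",
                ["read_sensor"], []),
]

if __name__ == "__main__":
    print("Requirements without tests:", untested(REQS))
    print("Impacted by a change to read_sensor:", impacted_by(REQS, "read_sensor"))
```

In this toy data set, a change to `read_sensor` touches both requirements, and `REQ-002` is flagged as having no test. These are the kinds of gaps a traceability tool is meant to surface automatically.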

Static analysis tools can also help ensure that the code follows a particular coding standard, which promotes clarity and consistency and removes the code-level vulnerabilities that the standard is designed to prevent. Static analysis can also serve as the basis for automatic test case generation, since it “understands” the code’s complexities and dependencies.
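As a rough illustration of rule checking without execution, the sketch below uses Python's standard `ast` module to parse source text and flag two patterns. The rule set here (`BANNED_CALLS` and the bare `except:` check) is an assumption invented for this example. It is not taken from MISRA, CERT C, or any commercial tool, all of which target C or C++ and perform far deeper data-flow and control-flow analysis.

```python
import ast

# Hypothetical rule set for illustration; real coding standards are far larger.
BANNED_CALLS = {"eval", "exec"}


def check_source(source: str) -> list[tuple[int, str]]:
    """Return (line, message) findings by inspecting the syntax tree only.

    The code under analysis is parsed, never run.
    """
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Rule 1: direct calls to functions on the banned list.
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Name)
                and node.func.id in BANNED_CALLS):
            findings.append((node.lineno, f"call to banned function {node.func.id}()"))
        # Rule 2: an 'except:' clause with no exception type.
        elif isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append((node.lineno, "bare 'except:' hides errors"))
    return sorted(findings)


# A small sample that works for valid input but violates both rules.
SAMPLE = '''\
def parse(text):
    try:
        return eval(text)
    except:
        return None
'''

if __name__ == "__main__":
    for line, message in check_source(SAMPLE):
        print(f"line {line}: {message}")
```

The sample function is "functionally correct" for well-formed input, yet the checker reports the `eval` call on line 3 and the bare `except:` on line 4, both before the code is ever executed.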

Coverage analysis, another key quality-analysis capability, provides a measure of the effectiveness of the testing process, showing what code has and has not been executed during the testing phases. All these capabilities should be integrated to expedite the path through software development and verification while providing transparency into the process that may be required by quality groups or regulatory authorities.

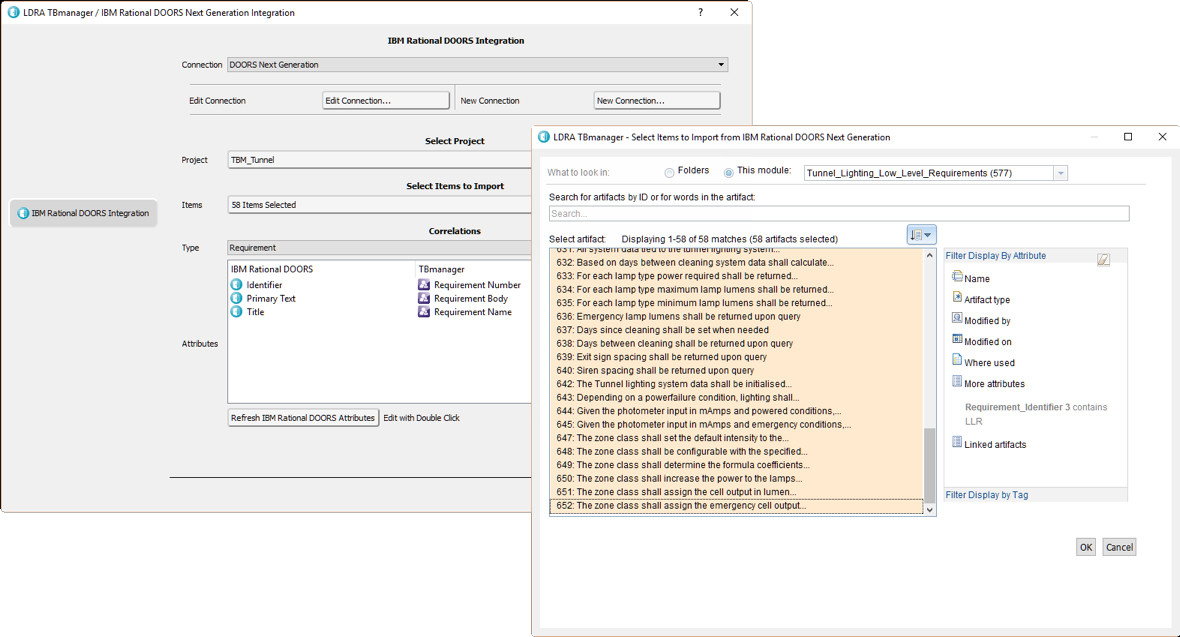

The IBM Rational DOORS system manages requirements for entire projects, such as these requirements for the lighting in a large tunnel project. A number of them link to software requirements, which the integrated LDRA tool suite can now test down to the source code.

As standards, specifications and verification technologies progress, it’s important that tools can be upgraded with add-on packages that provide enhanced security techniques. The tool suites themselves are now starting to appear in versions focused on major industry segments, such as automotive with its ISO 26262 standard. Development packages with sophisticated editors, debuggers, and performance tools can be integrated with verification tools, allowing customers to do development and testing in a single user environment. General industrial tools such as the IBM DOORS suite, which cover mechanical and other requirements along with software, can also gain an advantage by integrating in-depth software requirements traceability.

Measuring the effectiveness of the testing process as a whole is critical to developing high-assurance software. Understanding where tests need to be strengthened and where gaps in the testing process exist is fundamental to improving the overall process and quality of the code, and this need increases as tools become more focused on an application area. Therefore, leveraging techniques and technologies—such as coverage analysis with requirements traceability, static analysis and automated testing—can save both time and money by identifying potential vulnerabilities and weaknesses in the code early and throughout the software development lifecycle.